- Written by: Thomas Weise

Our team warmly congratulates Dr. Zijun Wu [吴自军博士] on his promotion to Full Professor [教授]. This promotion is the result of his outstanding research work, including, for instance, an article recently accepted by and published online in the journal Operations Research. Through his other publications and the funding he obtained from the National Natural Science Foundation of China (NSFC) [国家自然科学基金], Dr. Wu has made very important contributions to the development of our institute. Congratulations, Zijun!

- Written by: Thomas Weise

The Institute of Applied Optimization welcomes Associate Professor Dr. Jingneng Ni [倪敬能副教授] as a new member of our team. Before joining our institute, Dr. Ni worked as a Specialized Course Teacher in the Department of Mathematics and Applied Statistics [数学与应用统计系] of the School of Artificial Intelligence and Big Data [人工智能与大数据学院] at our Hefei University [合肥大学]. Dr. Ni obtained his Ph.D. [博士] in Management Science & Engineering from the Business School [四川大学商学院] of Sichuan University [四川大学], Chengdu [成都市], Sichuan [四川省], in 2016. He is an expert in modeling and simulation, operations research, bi-level decision making, and uncertain information processing. He has applied these methods very successfully to water resource management as well as to ecosystem engineering, protection, and restoration. Several of his studies concern the ecosystem of Chao Lake [巢湖], one of the five largest freshwater lakes in China, located very close to our city, Hefei.

We are very happy that Dr. Ni has joined our team. We are looking forward to working together and to exploring synergies with our research on optimization and hydrological modeling.

- Written by: Thomas Weise

Our team warmly congratulates Dr. Zhize Wu [吴志泽博士] on his promotion to Associate Professor [副教授]. This promotion is the result of his outstanding research work, which is documented in several high-quality papers, including two SCI-indexed papers in 2020 alone. Dr. Wu also co-supervises two graduate students and has made significant teaching contributions to our school. Congratulations, Zhize!

- Written by: Thomas Weise

Our team warmly congratulates Dr. Xinlu Li [李新路博士] on his promotion to Associate Professor [副教授]. After many years of hard work for our university, this is a well-deserved recognition. This year, Xinlu also published an excellent article, building on his track record of good research, and took on the supervision of two graduate students. He also contributes significantly to the teaching of our school. Congratulations, Xinlu!

- Written by: Thomas Weise

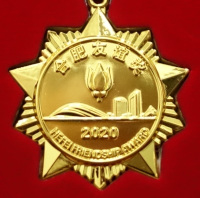

On December 16, 2020, the Hefei City Government [合肥市人民政府] held the Award Ceremony for the Third Batch of the Hefei Friendship Award for Foreign Experts, together with a Symposium on Foreign Experts' Suggestions for the Development of our City [第三届外国专家“合肥友谊奖”颁奖仪式暨外国专家建言献策座谈会]. The symposium was chaired by Mr. Aihua YU [虞爱华 (安徽省委常委、合肥市委书记)], member of the Standing Committee of the CPC Anhui Provincial Committee and Secretary of the CPC Hefei Municipal Committee, and Mrs. Yun LING [凌云(安徽省合肥市委副书记、市政府市长)], Deputy Secretary of the CPC Hefei Municipal Committee and Mayor of Hefei. The meeting was also attended and guided by Mr. Wensong WANG [王文松(安徽省合肥市委常委、常务副市长)], member of the Standing Committee of the CPC Hefei Municipal Committee and Executive Vice Mayor of Hefei; Mr. Ping LUO [罗平(安徽省合肥市人民政府党组成员、秘书长)], member of the Party Group and Secretary General of the Hefei Municipal People's Government; and Weiming WANG [市政府副秘书长汪维明], Deputy Secretary General of the Hefei Municipal People's Government.

In this third batch of Hefei Friendship Awards, ten international experts from seven countries, namely Germany, Russia, Japan, the United States, Australia, Costa Rica, and South Korea, received the award. Among the awardees who had the great honor of receiving the Hefei Friendship Award for Foreign Experts [外国专家“友谊奖”] was Prof. Dr. Thomas Weise, who had already received the Friendship Award of our district, the Hefei Economic and Technological Development Area, in 2018.