- Written by: Thomas Weise

2021 Genetic and Evolutionary Computation Conference (GECCO'21)

Lille, France, July 10-14, 2021

http://iao.hfuu.edu.cn/aaboh21

The Analysing Algorithmic Behaviour of Optimisation Heuristics Workshop (AABOH), as part of the 2021 Genetic and Evolutionary Computation Conference (GECCO'21), invited the submission of original and unpublished research papers. Here you can download the AABOH Special Session Call for Papers (CfP) in PDF format and here as a plain text file.

Optimisation and Machine Learning tools are among the most widely used tools in the modern world with its omnipresent computing devices. Yet, the dynamics of these tools have not been analysed in detail. This scarcity of knowledge on the inner workings of heuristic methods is largely attributed to the complexity of the underlying processes, which cannot be subjected to a complete theoretical analysis. However, it is also partially due to superficial experimental set-ups and, therefore, a superficial interpretation of numerical results. Indeed, researchers and practitioners typically only look at the final result produced by these methods, while the vast amount of information collected over the run(s) is wasted. In light of such considerations, it is becoming more evident that this information can be useful and that design principles should be defined that allow for online or offline analysis of the processes taking place in the population and their dynamics.

Hence, with this workshop, we call for both theoretical and empirical achievements identifying the desired features of optimisation and machine learning algorithms, quantifying the importance of such features, spotting the presence of intrinsic structural biases and other undesired algorithmic flaws, studying the transitions in algorithmic behaviour in terms of convergence, any-time behaviour, performances, robustness, etc., with the goal of gathering the most recent advances to fill the aforementioned knowledge gap and disseminate the current state-of-the-art within the research community.


- Written by: Thomas Weise

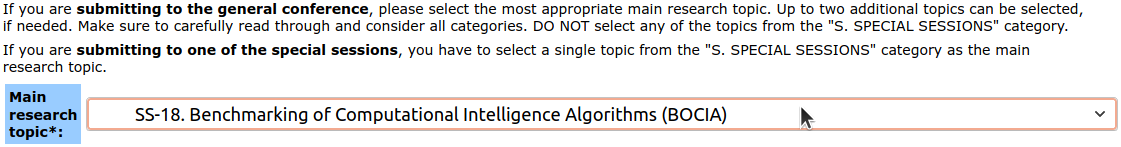

Our team co-organizes the Special Session on Benchmarking of Computational Intelligence Algorithms (BOCIA), as part of the 2021 IEEE Congress on Evolutionary Computation (CEC 2021). We cordially invite the submission of original and unpublished research papers. Here you can download the BOCIA Special Session Call for Papers (CfP) in PDF format and here as a plain text file. The IEEE CEC 2021 online submission system is now open, and it is possible to submit papers to our special session by selecting "SS-18. Benchmarking of Computational Intelligence Algorithms (BOCIA)" as the Main Research Topic.

- Written by: Thomas Weise

The Institute of Applied Optimization welcomes Dr. Rongwang Yin [殷荣网博士], who joined our team today as a researcher. Before joining our institute, he completed his PhD research at the School of Engineering Science (SES) [工程科学学院] of the University of Science and Technology of China (USTC) [中国科学技术大学] in Hefei [合肥], Anhui [安徽], China [中国]. Dr. Yin is an expert in applying optimization and AI technologies to problems in the petroleum industry, such as identifying multistage fracturing horizontal well parameters and physical reservoir properties, and he has also contributed work on renewable energies and wireless network communication.

We are very happy that Dr. Yin joined our team. We are looking forward to working together on his exciting research topics.

- Written by: Thomas Weise

Today, December 2nd, 2020, marks the 40th Anniversary of our Hefei University [合肥学院]. In 1980, our university was founded under the name Hefei United University [合肥联合大学] by the Secretary of the Hefei Municipal Party Committee, Rui ZHENG [合肥市委书记郑锐], and the Vice President of USTC, Mr. Chengzong YANG [中国科大副校长杨承宗]. In 2002, Hefei United University merged with the Hefei Institute of Education [合肥教育学院] and Hefei Normal School [合肥师范学校] to form Hefei University [合肥学院] with the approval of the Ministry of Education [教育部批准]. Since its establishment, our university has implemented the concepts of locality, application-orientation, and internationalization [“地方性、应用型、国际化”]. Chinese-German collaboration has always played a large role in the development of our university, which spearheaded the adaptation of German concepts for application-oriented education, such as the dual system of cooperative education [双元制合作教育], to Chinese needs. With the support of both the Chinese and the German governments and inspired by the meeting of the German Chancellor Dr. Angela Merkel and the Chinese Premier Keqiang LI [李克强 (国务院总理)] at our university, the Demonstration Base for Sino-German Educational Cooperation [中德教育合作示范基地] was launched here in 2015. Today, our university has more than 17'000 students and 1000 teachers. All of us are thankful for the foundation laid by 40 years of hard work by the teachers, students, administration staff, and service workers of our university. We will try our best to keep improving our Hefei University for a long and successful future. Happy Birthday, Hefei University!

13. Deutsch-Chinesisches Symposium zur Anwendungsorientierten Hochschulausbildung [第十三届中德应用型高等教育研讨会]

- Written by: Thomas Weise

Today, on December 1st, 2020, the "13. Deutsch-Chinesisches Symposium zur Anwendungsorientierten Hochschulausbildung" [第十三届中德应用型高等教育研讨会], i.e., the 13th Chinese-German Symposium on Application-Oriented University Education, took place in Hefei [安徽省合肥市]. The location of the event alternates yearly between Hefei and Osnabrück (Germany), and it is always co-organized by Hefei University [合肥学院] and the Hochschule Osnabrück [奥斯纳布吕克应用科学大学] under the guidance of the Ministry of Education of Anhui Province, China, and the Ministry for Science and Culture of Lower Saxony, Germany. The topic this year was "Smart-Learning, Industrie-Lehre-Integration und Hochwertige Anwendungsorientierte Hochschulausbildung", i.e., Smart Learning, Industry-Education Integration, and High-Quality Application-Oriented University Education. Due to this year's special situation, the symposium was condensed to a single day. Nevertheless, it featured many highly interesting talks given by university professors and educational leaders from both China and Germany. After the opening ceremony and five keynotes, three parallel sessions were held. German and Chinese professors took turns giving presentations in two of the sessions. In the third session, the presidents of several universities exchanged their perspectives and ideas. The meeting was well attended and highly successful. The integration of in-person talks and web-based presentations was seamless, allowing for the full participation of experts who could not attend the meeting physically. For thirteen years now, this symposium has significantly influenced the development of application-oriented university education in both China and Germany. Next year, its 14th iteration will take place in Osnabrück and I am very much looking forward to it.

- Lorentz Center Workshop "Benchmarked: Optimization Meets Machine Learning" with Breakout Session organized by Prof. Weise

- Special Session on Benchmarking of Computational Intelligence Algorithms (BOCIA'21)

- Welcome to Four New Graduate Students

- Prof. Weise Attends the Hefei Mid-Autumn Tea Party for People from All Walks of Life [合肥市各界人士国庆中秋茶话会]